Tesla's Latest FSD Beta Doesn't Seem Ready For Public Use, Which Raises Big Questions

If someone tells you FSD drives better than a human, do not let that person drive you anywhere

I know we write a lot about Tesla's semi-automated driving systems, known, quite deceptively, as both Autopilot and Full Self-Driving. And why shouldn't we? These emerging technologies are a big deal, and we're all in an interesting transition period, as the widespread use of automated driver-assist systems is becoming A Thing for the first time. Tesla's systems tend to get the most attention, because, you know, Tesla, and also the fact that they're being actively tested on public roads.

We're now starting to see videos from the just-released FSD Beta 10.3, just like we saw videos of previous releases. And, like every other release, the results are a mix of impressive and concerning. There's a new video of a drive in a Tesla equipped with Beta 10.3, and it's interesting for a number of reasons, so let's watch it and discuss some things, why not?

Here's the video, from Tesla owner and YouTuber Out of Spec Reviews:

What I like about this test is that it presents a very good mix of everyday, normal driving situations in an environment with a good mix of traffic density, road complexity, lighting conditions, road markings, and more. In short, reality, the same sort of entropy-heavy reality all of us live in and where we expect our machines to work.

There's a lot that FSD does that's impressive when you consider that this is an inert mass of steel and rubber and silicon that's effectively driving on its own through a crowded city. We've come a long way since Stanley the Touareg finished the DARPA Grand Challenge back in 2005, and there's so much to be impressed by.

At the same time, this FSD beta proves to be a pretty shitty driver, at least in this extensive test session.

Anyone arguing that FSD in its latest state drives better than a human is either delusional, high from the fumes of their own raw ardor for Elon Musk, or in need of better-driving humans to hang out with.

FSD drives in a confusing, indecisive way, making all kinds of peculiar snap decisions and generally being hard for the drivers around it to read and predict. Which is a real problem.

Drivers expect a certain baseline of behaviors and reactions from the cars around them. That means there's not much that's more dangerous to surrounding traffic than an unpredictable driver, which this machine very much is.

And that's when it's driving at least somewhat legally; there are several occasions in this video where traffic laws were actually broken, including two instances of the car attempting to drive the wrong way down a street and into oncoming traffic.

Nope, not great.

In the comments, many people have criticized Kyle, the driver/supervisor, for allowing the car to make terrible driving decisions instead of intervening. The reasoning for this ranges from simple Tesla-fan-rage to the need for disengagements to help the system learn, to concern that by not correcting the mistakes, Kyle is potentially putting people in danger.

They're also noting that the software is very clearly unfinished and in a beta state, which is pretty clearly true as well.

These are all reasonable points. Well, the people just knee-jerk shielding Elon's Works from any scrutiny aren't reasonable, but the other points are, and they bring up bigger issues.

Specifically, there's the fundamental question of whether it makes sense to test an unfinished self-driving system on public roads, surrounded by people, in or out of other vehicles, who did not agree to participate in beta testing of any kind.

You could argue that a student driver is a human equivalent of beta testing our brain's driving software, though when this is done in any official capacity, there's a professional driving instructor in the car, sometimes with an auxiliary brake pedal, and the car is often marked with a big STUDENT DRIVER warning.

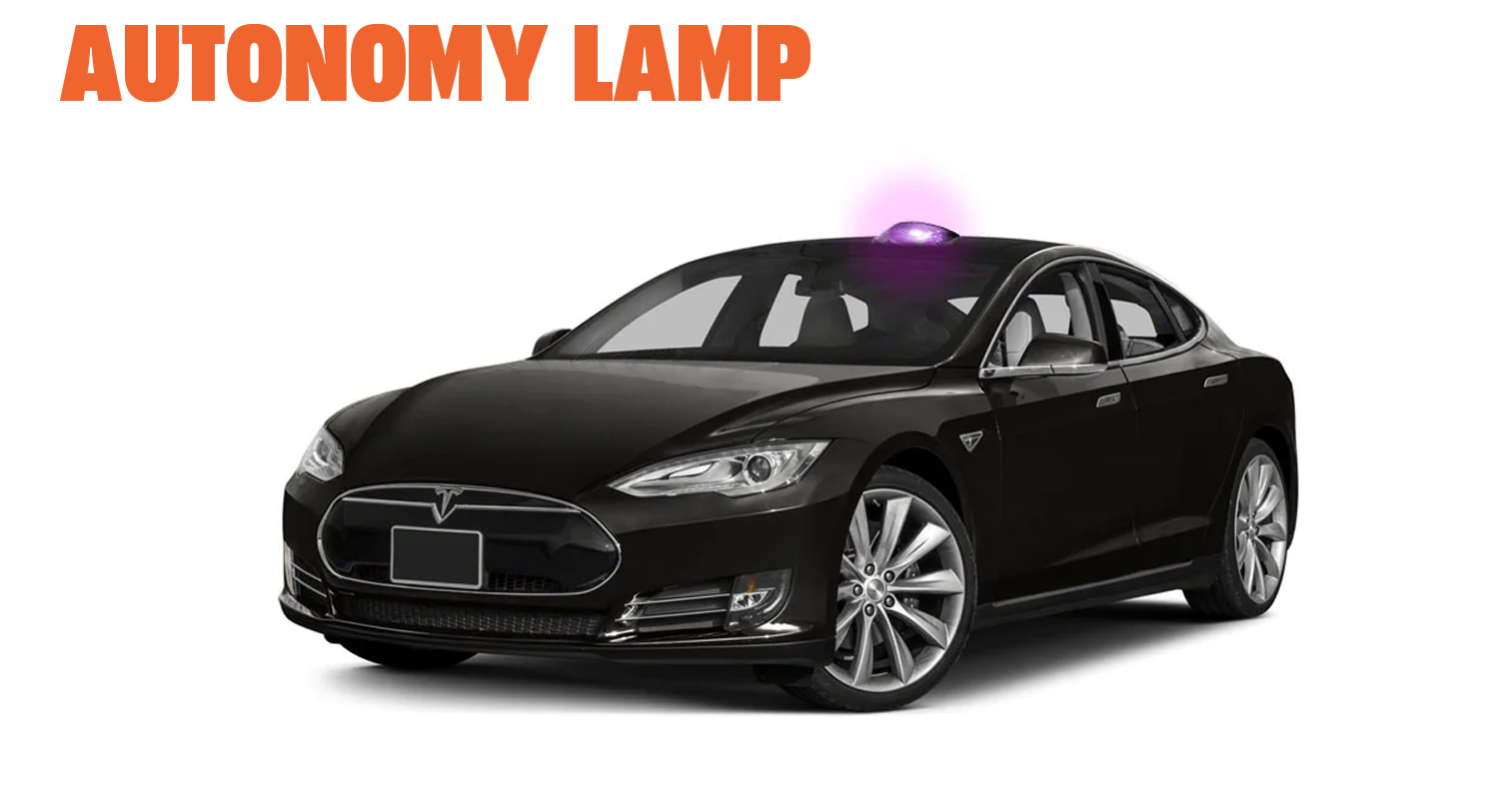

I've proposed the idea of some kind of warning lamp for cars under machine control, and I still think that's not a bad idea, especially during the transition era we find ourselves in.

Of course, in many states, you can teach your kid to drive on your own without any special permits. That context is quite similar to FSD beta drivers since they don't have any special training beyond a regular driver's license (and no, Tesla's silly Safety Score does not count as special training).

In both cases, you're dealing with an unsure driver who may not make good decisions, and you may need to take over at a moment's notice. On an FSD-equipped Tesla (or really any L2-equipped car), taking over should be easy, in that your hands and other limbs should already be in position on the car's controls.

In the case of driving with a kid, this is less easy, though still possible. I know because I was once teaching a girlfriend at the time how to drive and had to take control of an old manual Beetle from the passenger seat. You can do it, but I don't recommend it.

Of course, when you're teaching an uncertain human, you're always very, very aware of the situation and nothing about it would give you a sense of false confidence that could allow your attention to waver. This is a huge problem with Level 2 semi-automated systems, though, and one I've discussed at length before.

As far as whether or not the FSD beta needs driver intervention to "learn" about all the dumb things it did wrong, I'm not entirely sure this is true. Tesla has mentioned the ability to learn in "shadow mode," which would eliminate the need for FSD to be active to learn driving behaviors by example.

As far as Kyle's willingness to let FSD beta make its bad decisions, sure, there are safety risks, but it's also valuable to see what it does to give an accurate sense of just what the system is capable of. He always stepped in before things got too bad, but I absolutely get that this in no way represents safe driving.

At the same time, showing where the system fails helps users of FSD have a better sense of the capabilities of what they're using so they can attempt to understand how vigilant they must be.

This is all really tricky, and I'm not sure yet of the best practice solution here.

This also brings up the question of whether Tesla's goals make sense in regard to what's known as their Operational Design Domain (ODD), which is just a fancy way of saying "where should I use this?"

Tesla has no restrictions on their ODD, as referenced in this tweet:

When the whole world is your ODD, it's hard to validate your SW as safe to release

Real AV cos don't operate in limited ODDs because they are behind Tesla. They do it because they value safety unlike Tesla

Specific ODDs are critical for safety at this early stage of AV dev pic.twitter.com/ZR4hNdAxuA

— AutonoDriver (@AutonoD) October 27, 2021

This raises a really good point: should Tesla define some sort of ODD?

I get that their end goal is Level 5 full, anywhere, anytime autonomy, a goal that I think is kind of absurd. Full Level 5 is decades and decades away. If Tesla freaks are going to accuse me of literally having blood on my hands for allegedly delaying, somehow, the progress of autonomous driving, then you'd think the smartest move would be to restrict the ODD to areas where the system is known to work better (highways, etc) to allow for more automated deployment sooner.

That would make the goal more Level 4 than 5, but the result would be, hopefully, safer automated vehicle operation, and, eventually, safer driving for everyone.

Trying to make an automated vehicle work everywhere in any condition is an absolutely monumental task, and there's still so, so much work to do. Level 5 systems are probably decades away, at best. Restricted-ODD systems may be able to be deployed much sooner, and maybe Tesla should be considering doing that, just like many other AV companies (Waymo, Argo, and so on) are doing.

We're still in a very early transition period on this path to autonomy, however that turns out. Videos like these, which show the real-world behavior of such systems, problems and all, are very valuable, even if we're still not sure about the ethics of making them.

All I know is that now is the time to question everything, so don't get bullied by anyone.