Tesla's AI Day Event Did A Great Job Convincing Me They're Wasting Everybody's Time

Level 2-assisted driving, especially in-city driving, is worse than useless. It's stupid.

Tesla's big AI Day event just happened, and I've already told you about the humanoid robot Elon Musk says Tesla will be developing. You'd think that would be the most eye-roll-inducing thing to come out of the event, but, surprisingly, it isn't. The part of the presentation that actually baffled me most came near the beginning: a straightforward demonstration of Tesla "Full Self-Driving." I'll explain.

The part I'm talking about is a repeating loop of a sped-up daytime drive through a city using Tesla's FSD. The drive contains a good number of complex and varied traffic situations, road markings, maneuvers, pedestrians, and other cars: all the good stuff.

The Tesla performs the driving pretty flawlessly. Here, watch for yourself:

Now, technically, there's a lot to be impressed by here: the car is doing an admirable job of navigating the environment. The more I watched it, though, the more I realized one very important point: this is a colossal waste of time.

Well, that's not entirely accurate: it's a waste of time, talent, energy, and money.

I know that sounds harsh, and it's not entirely fair. A lot of this research and development is extremely important for the future of self-driving vehicles, but the current implementation (and, from what I can tell, the plan moving ahead) is still focused on the wrong things.

Here's the root of the issue, and it's not a technical problem. It's the fundamental flaw of all these Level 2 driver-assist, full-attention required systems: what problem are they actually solving?

That segment of video was kind of maddening to watch, because it's an entirely mundane, unchallenging drive for any remotely decent, sober driver. I watched that car turn the wheel while the person in the driver's seat kept a hand right there, the wheel spinning through their loose fingers, their feet inches from the pedals. All of this extremely advanced technology was doing something the driver was not only fully capable of doing on their own, but was in exactly the right position and mental state to be doing.

What's being solved, here? The demonstration of FSD shown in the video is doing absolutely nothing the human driver couldn't do, and doesn't free the human to do anything else. Nothing's being gained!

It would be as if Tesla designed a humanoid dishwashing robot that worked fundamentally differently from the dishwashing robots many of us have tucked under our kitchen counters.

The Tesla Dishwasher would stand over the sink, like a human, washing dishes with human-like hands, but for safety reasons you would have to stand behind it, your hands lightly holding the robot's hands, like a pair of young lovers in their first apartment.

Normally, the robot does the job just fine, but there's a chance it could get confused and fling a dish at a wall or person, so for safety you need to be watching it, and have your hands on the robot's at all times.

If you don't, it beeps a warning, and then stops, mid-wash.

Would you want a dishwasher like that? You're not really washing the dishes yourself, sure, but you're also not not washing them, either. That's what FSD is.

Every time I saw the Tesla in that video make a gentle turn or come to a slow stop, all I could think is, buddy, just fucking drive your car! You're right there. Just drive!

The effort being spent on making FSD better at what it already does is fine, but it's misguided. Where that effort really needs to go, for automated driving, is into developing systems and procedures that let the cars safely get out of the way, without human intervention, when things go wrong.

Level 2 is a dead end. It's useless. Well, maybe not entirely: I suppose on long highway trips or in very slow stop-and-go traffic it can be a useful assist. But it would all be better if the weak link, the part that causes the problems, were eliminated: the demand that a human be ready to take over at any moment.

Tesla, and everyone else in this space, should be focusing on the two main areas that these systems could actually make better: long, boring highway drives and stop-and-go traffic. Those are the situations where humans are most likely to stop paying attention and make foolish mistakes, or to be fatigued or distracted.

The type of driving shown in the FSD video here, daytime short-trip city driving, is likely the least useful application for self-driving.

If we're all collectively serious about wanting automated vehicles, the only sensible next step is to actually make them forgiving of human inattention, because that is the one thing you can guarantee will be a constant factor.

Level 5 drive-everywhere cars are a foolish goal. We don't need them, and the effort it would take to develop them is vast. What's needed are systems around Level 4, focusing on long highway trips and painful traffic jam situations, where the intervention of a human is never required.

This isn't an easy task. The eventual answer may require infrastructure changes or remote human intervention to pull off properly, and hardcore autonomy/AI fetishists find those solutions unsexy. But who gives a shit what they think?

Eliminating the need for immediate driver handoffs, and being able to get a disabled or confused AV out of traffic and out of danger, may also require robust car-to-car communication and cooperation between carmakers, which is another huge challenge. But it needs to happen before AVs can win any meaningful acceptance.

Here's the bottom line: if your AV only really works safely if there is someone in position to be potentially driving the whole time, it's not solving the real problem.

Now, if you want to argue that Tesla's system and other L2 systems offer a safety advantage (I'm not convinced they necessarily do, but whatever), then I think there's a way to leverage all of this impressive R&D and keep the safety benefits of these L2 systems. How? By doing it the opposite of the way we do it now.

What I mean is a role reversal: if safety is the goal, then the human should be the one driving, with the AI watching, always alert and ready to take over in an emergency.

In this inverse-L2 model, the car still does all the complex AI work it would do in a system like FSD, but it only takes over when it sees that the human driver isn't responding to a potential problem.

This guardian-angel approach offers all the safety advantages a good L2 system could provide, and, because it's a computer, it will always be attentive and ready to take over if needed.

Driver monitoring systems won't be necessary, because the car won't drive unless the human is actually driving. And if the driver gets distracted or misses a pedestrian or another car, the AI steps in to help.

All of this development can still be used! We just need to do it backwards, and treat the system as an advanced safety back-up driver system as opposed to a driver-doesn't-have-to-pay-so-much-attention system.

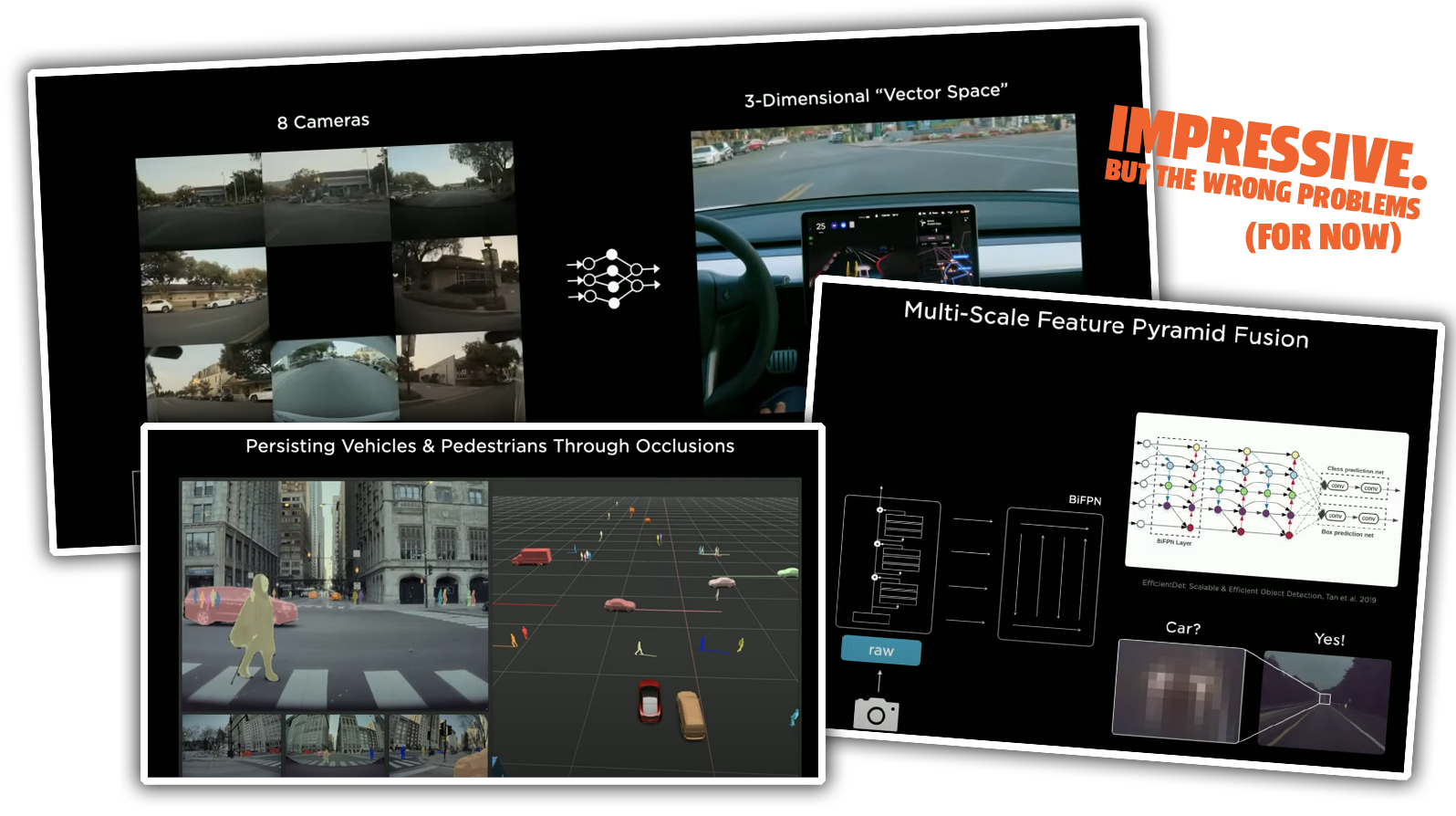

Andrej Karpathy and Tesla's AI team are incredibly smart and capable people. They've accomplished an incredible amount. Those powerful, pulsating, damp brains need to be directed to solving the problems that actually matter, not making the least-necessary type of automated driving better.

Solving the handoff problem will also eliminate the need for flawed, easily tricked driver monitoring systems, which will always be in an arms race with moron drivers who want to pretend they live in a different reality.

It's time to stop polishing the turd that is Level 2 driver-assist systems and actually put real effort into developing systems that stop putting humans in the ridiculous, dangerous space of both driving and not driving.

Until we get this solved, just drive your damn car.