Confusion Between Self-Driving Cars And Driver-Assistance Systems Is Still Rampant: Study

The modern car market is in a period of transition as semi-autonomous features migrate onto it, and not in the joyous, life-is-about-to-change-forever kind of way. It's more like it just hit middle school, and a bunch of terrible, miserable things no one understands are happening to it.

The news now is that the Insurance Institute for Highway Safety recently asked more than 2,000 drivers about current driver-assistance systems on the market, and found that a lot of them have no clue what the capabilities of the systems are.

There is, as Jalopnik contributor Tom McParland describes it, "a dangerous combo of deceptive marketing, imperfect tech, and a lack of critical thinking from the consumer" on the market right now. "Imperfect tech" is the nice way to put it, considering the mess that apparently is General Motors' self-driving Cruise division and the fact that testing self-driving cars can be fatal.

In that context, IIHS asked people about a few level-two driver-assistance systems currently on the market. Automation is commonly ranked on a scale that tops out at level five, which means self-driving under any conditions; level-two systems require the driver to stay actively engaged and alert to what's going on.

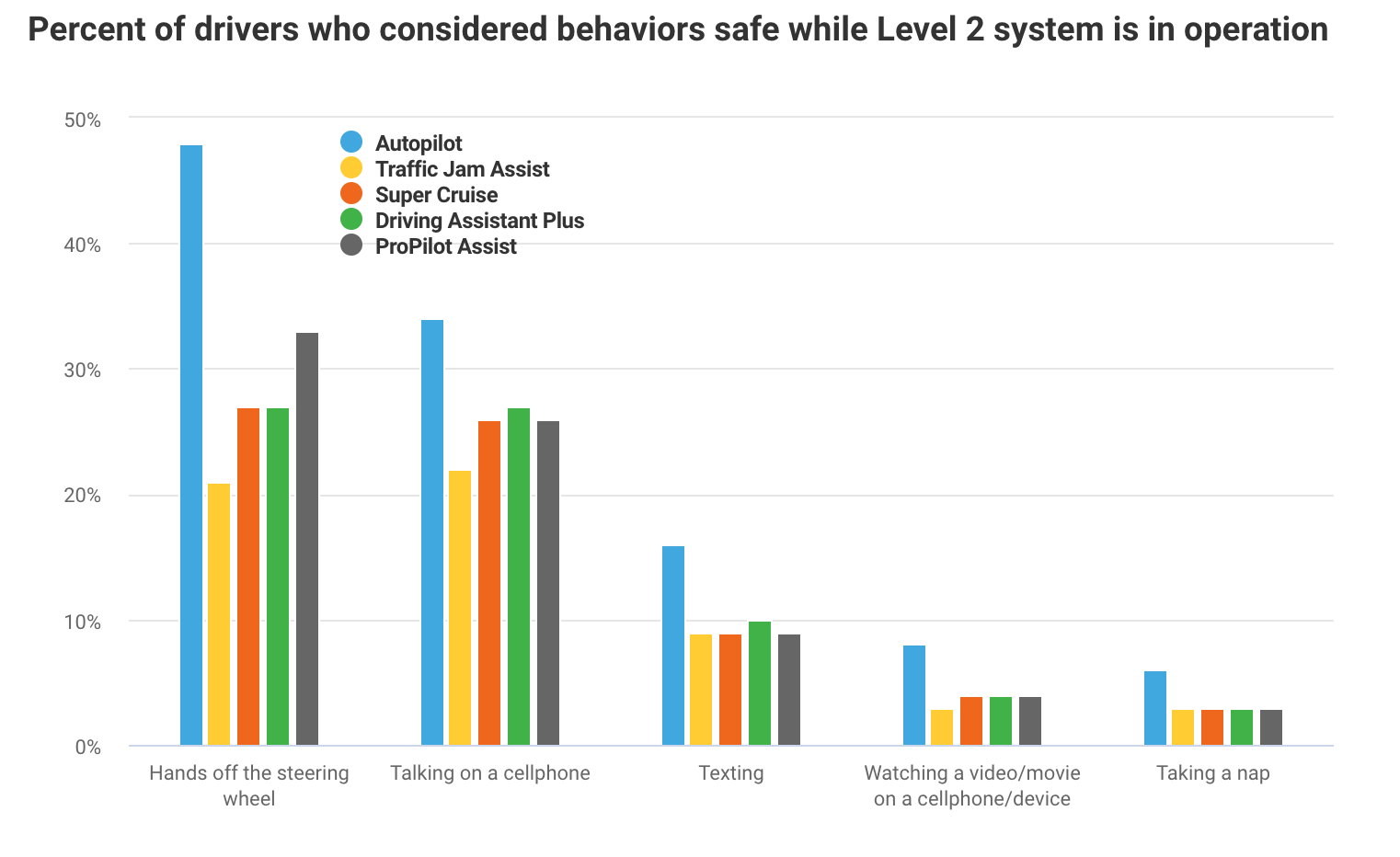

IIHS found that a lot of people weren't aware that's the case. Here's a chart of what people thought would be acceptable to do while operating various level- two technology that's currently on the market, and it isn't great:

The five systems IIHS asked about, as shown above, were Tesla's Autopilot, Audi and Acura's Traffic Jam Assist programs, Cadillac's Super Cruise, BMW's Driving Assistant Plus and Nissan's ProPilot Assist. IIHS said people were only given the names of the systems, not the automakers that use each of them.

IIHS found that, as anyone could've guessed, the "Autopilot" name led people to think that they could pay less attention at the wheel, which isn't the case. (It doesn't help that Tesla describes its cars as having "full self-driving capability," because people can gloss over that and assume the car has things covered.)

But the study found that the problem goes deeper than the names of the systems, and into how automakers display driver-assistance information on instrument clusters. IIHS said it had 80 people watch videos shot from the driver's perspective in a 2017 Mercedes-Benz E-Class, a car that Mercedes, by the way, advertised in 2017 as "self driving" when it only has driver-assistance features. Mercedes later pulled the ad, but not before adding to the confusion about autonomy.

Half of the 80 participants got a short orientation to the cluster icons in the car, but IIHS said it didn't make everyone an expert. They were far from it, actually:

In the study, certain key pieces of information eluded many of the participants. While almost everyone was able to understand when adaptive cruise control had adjusted the vehicle speed or detected another vehicle ahead, most participants, regardless of whether they received the training, struggled to understand what was happening when the system didn't detect a vehicle ahead because it was initially beyond the range of detection.

Most of the people who didn't receive training also struggled to identify when lane centering was inactive. In the training group, many people got that right. However, even in that group, participants often couldn't explain why the system was temporarily inactive.

IIHS has more on its findings here.

Right now, cars are in that awkward, indefinite stage between being operated by humans and operating on their own. While technology and car companies would like everyone to believe that self-driving cars will be on the market any day now—hence the pulled Mercedes ad—they won't, because the technological and regulatory hurdles are lined up further than the eye, or lidar system, can see.

But while carmakers and tech companies navigate those many, many hurdles to get actual self-driving cars onto showroom floors, those of you actually in the market for a new car can take some advice from your middle-school selves when it comes to figuring out driver-assistance technology: If you don't know what's going on, just google it.

Update: Thursday, June 20, 2019 at 4:10 p.m. ET: Regarding the Autopilot findings by IIHS, Tesla sent CNET an emailed statement saying that the results are "not representative of the perceptions of Tesla owners or people who have experience using Autopilot, and it would be inaccurate to suggest as much."

"If [the] IIHS is opposed to the name 'Autopilot,' presumably they are equally opposed to the name 'Automobile,'" the statement said, according to CNET. It's a ridiculous argument by Tesla in favor of the name, given that the connotations of "autopilot" and "automobile" are on completely different planets.

"Tesla provides owners with clear guidance on how to properly use Autopilot, as well as in-car instructions before they use the system and while the feature is in use," the statement said.