Video Shows Ford's Co-Pilot360 Has Some Of The Same Driver Monitoring Issues We've Seen On Tesla Autopilot

I've been sent this video numerous times since I went on the YouTube show of a raging, dead-eyed ghoul who drives a Passat and has an all-encompassing love for Elon Musk in the void where his soul once resided. The senders are hardcore Tesla devotees who feel the semi-autonomous systems of other carmakers aren't getting the same scrutiny as Tesla's Autopilot. They do have a point: all Level 2 semi-automated systems on the market are worth covering, so let's look at Ford's Co-Pilot360 system in the Mustang Mach-E. There are issues here, but even more important are the bigger questions they raise.

This particular video is made by Minimal Duck, a YouTuber with what appears to be a very good dog and a lot of Tesla enthusiast videos. The tone of the video is very clearly pro-Tesla and is very eager to disparage Ford's system, but the essential actions and behaviors of Co-Pilot360 are shown accurately, which is all I really care about, in this context.

Anyway, here, you can watch for yourself:

Before we talk about the issues shown in the video, let's clarify what Co-Pilot360 is. An earlier version of this article was unclear and used some incorrect terminology, but I've spoken with a Ford PR rep to clarify.

BlueCruise, Ford's upcoming Level 2 semi-automated system, will be available in the third quarter of this year, and will be more comparable to Tesla's Autopilot or GM's Super Cruise.

BlueCruise can operate in a hands-free mode on pre-qualified sections of highway, and in this case driver monitoring is accomplished by a set of cameras facing the driver, set on the steering column at about the same location that column-shifted automatic cars mounted the PRNDL indicator.

What was seen on the car in the video is part of the Co-Pilot360 suite of tools, which includes a combination of adaptive cruise control, lane centering, speed sign recognition and what Ford calls Stop-and-Go, which is designed for, as the name suggests, stop-and-go traffic.

Based on the SAE levels chart, the system meets basic Level 2 requirements by being a simultaneous lane centering and dynamic cruise setup.

The system seen in the video (again, not BlueCruise) appears to be less advanced than Tesla's Autopilot, and doesn't appear to do things like compensating speed for curves and other more advanced semi-automated behaviors. As such, it's positioned and marketed much more as a driver assist system, with minimal or no claims of actual self-driving, as suggested by the overarching "co-pilot" branding.

That's a good thing, as there are no currently available full self-driving systems on the market, and confusion about that has been one of the major issues with Tesla's Autopilot and Full Self-Driving systems. Overconfidence in these systems has led to all kinds of irresponsible and dangerous behaviors and incidents over the past few years.

The big issues that are pointed out in this video are that the Co-Pilot360 system does not disengage when it detects a seat belt unbuckled, and the system also does not appear to check to see if there is any weight in the driver's seat.

Of these, I think the more serious one is the lack of checking to see if the driver's seat is occupied when the semi-automated systems are active. This is a problem with both Ford and Tesla, and the lack of a simple weight sensor is a big factor in why all those idiotic driverless Tesla viral videos on TikTok and other social media platforms are a thing.

Ford, like Tesla, should fix this significant oversight, or they'll soon be seeing similar videos of idiots misusing their semi-automated system, and we'll have to report on those, and then I'll get pissed-off Ford stans up in my shit, too.

This one also feels remarkably easy to solve — cars already have weight sensors in passenger seats to determine if the airbag will be disabled or not. Hell, my old '73 Beetle has a simple pressure switch to determine if it should turn on the incredibly grating 'fasten seat belts' buzzer and light. This isn't hard.

The one that got me thinking more, though, is the seat belt issue. In a Tesla, if you unlatch the driver's side seat belt, Autopilot will disengage. That's why a determined moron (of which supplies are seemingly plentiful) who wants to fool the system and leave the seat would need to latch the belt, then just sit on it, like seemingly everybody used to do back in the 1970s.

When Consumer Reports demonstrated that Tesla Autopilot can be fooled, they had to do this as well. At first, it seems to make sense that you'd want to cut off any driver assist systems if the seat belt was undone while driving, but then I started thinking about this more.

I know I've taken off my seat belt while driving (including at higher speeds) before, and, when I think about why I've done it, almost every time it's been for the same reason: it's winter, I got into the car with a jacket on because it was cold, and then the car's interior eventually heated up and I was hot, so, I wanted to take off my jacket.

Usually this process involves waiting until you get to a stretch of relatively straight and empty road, undoing your seat belt, furtively struggling the jacket off while you steer with your knees, then flinging it into the back or passenger's seat before re-buckling your belt and getting back to driving normally.

I'd wager that almost everyone reading this has done the same sort of thing at some point in their driving lives, likely many times. Doing this isn't exactly smart. In fact, strictly speaking, it's a terrible idea: you're giving up a good bit of control of the car for the few seconds you're wrestling that jacket off, and if something unexpected were to happen in those seconds, you'd likely be pretty boned, especially because you aren't belted in during the period when your driving is most compromised.

I don't have statistics for this, but I'm sure there have been crashes as a result of jacket-removal or belt-off-to-grab-something-from-the-floor or any number of similar momentary driver lapses that we've all been guilty of at some point.

So this raises a big question: isn't this exactly the kind of situation where a driving-assist system would provide the most benefit?

Again, I'm not saying this is smart behavior, taking your hands off the wheel, removing your seat belt to yank off a jacket — but it's behavior we know human beings do, all the time. I'm inclined to think driver assist systems should be technologies that are inherently forgiving of our bad ideas, systems that have our back.

This is a subtle but important marketing and positioning distinction among Level 2 driver-assist systems: do we think of them as systems that drive the car while we monitor, or are we driving the car, with the systems there to help when we make mistakes?

I feel like Tesla has been positioning Autopilot as the first option there, the car driving, the driver hovering, waiting to take over. This introduces all manner of trouble relating to the vigilance problem I've talked about before, and Lex Fridman's study that suggests Tesla AP drivers all stay vigilant has real problems and doesn't come close to dismissing this very real issue.

Moving forward, for Level 2 systems that lack any sort of safe handoff or failover in case the driver is determined to be non-responsive (this is all of them, really), the second option, where the human is the primary driver and the L2 system is there to compensate for any driving mistakes, seems like the much better one.

Of course, there are a lot of important factors to figure out here, too. So, if we decide that there may be reasons why a person in the driver's seat may remove their seat belt (again, not good reasons, but this is something we know humans will absolutely do), how do we manage this to prevent actual abuse?

Should there be a time limit? Like, say, you undo your seat belt and the car delivers a loud warning tone and a message with a countdown, perhaps? Maybe you get a maximum of 15 seconds to get that jacket off before the driver assist system shuts down?
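To make that idea a bit more concrete, here's a minimal Python sketch of a seat-belt grace period. To be clear, this is purely my illustration of the hypothetical above: the `BeltGraceTimer` class, its method names, and the "ok"/"warn"/"disengage" states are all made up for this example, and the 15-second figure is just the number floated above, not anything Ford, Tesla, or any other automaker actually does.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical limit taken from the paragraph above; not a real automaker value.
GRACE_SECONDS = 15.0


@dataclass
class BeltGraceTimer:
    """Tracks how long the driver's belt has been unlatched while assist is on."""

    grace_seconds: float = GRACE_SECONDS
    unlatched_at: Optional[float] = None  # time the belt was first seen unlatched

    def update(self, now: float, belt_latched: bool) -> str:
        """Return 'ok', 'warn' (countdown running), or 'disengage'."""
        if belt_latched:
            # Re-buckling cancels the countdown entirely.
            self.unlatched_at = None
            return "ok"
        if self.unlatched_at is None:
            # First moment we notice the belt is off: start the countdown.
            self.unlatched_at = now
        elapsed = now - self.unlatched_at
        return "warn" if elapsed < self.grace_seconds else "disengage"

    def seconds_left(self, now: float) -> float:
        """Seconds remaining on the countdown, for the warning message."""
        if self.unlatched_at is None:
            return self.grace_seconds
        return max(0.0, self.grace_seconds - (now - self.unlatched_at))
```

In this sketch the car would call `update()` with a clock reading and the belt sensor state every so often, sound the tone and show `seconds_left()` while the result is "warn", and shut the assist down on "disengage". Whether an abrupt shutdown is even the right response at that point is exactly the kind of thing that would still need deciding.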

Something like that could help make a bad idea that so, so many people act on a bit safer, couldn't it? But does this encourage bad driving behavior further? Or does it make sense to accept some degree of likely dumb human behaviors if there are reasonable ways to compensate for them?

The truth is, I don't know for sure. These are not easy problems to solve, and I'm not sure these decisions should be left up to the automakers. We, the drivers, should be the ones who decide how we want driver assist systems to function, and we should establish a set of preferred, expected behaviors so we know how all these various systems will react.

This is, of course, far easier typed than done. But that doesn't mean it's not a better way forward.

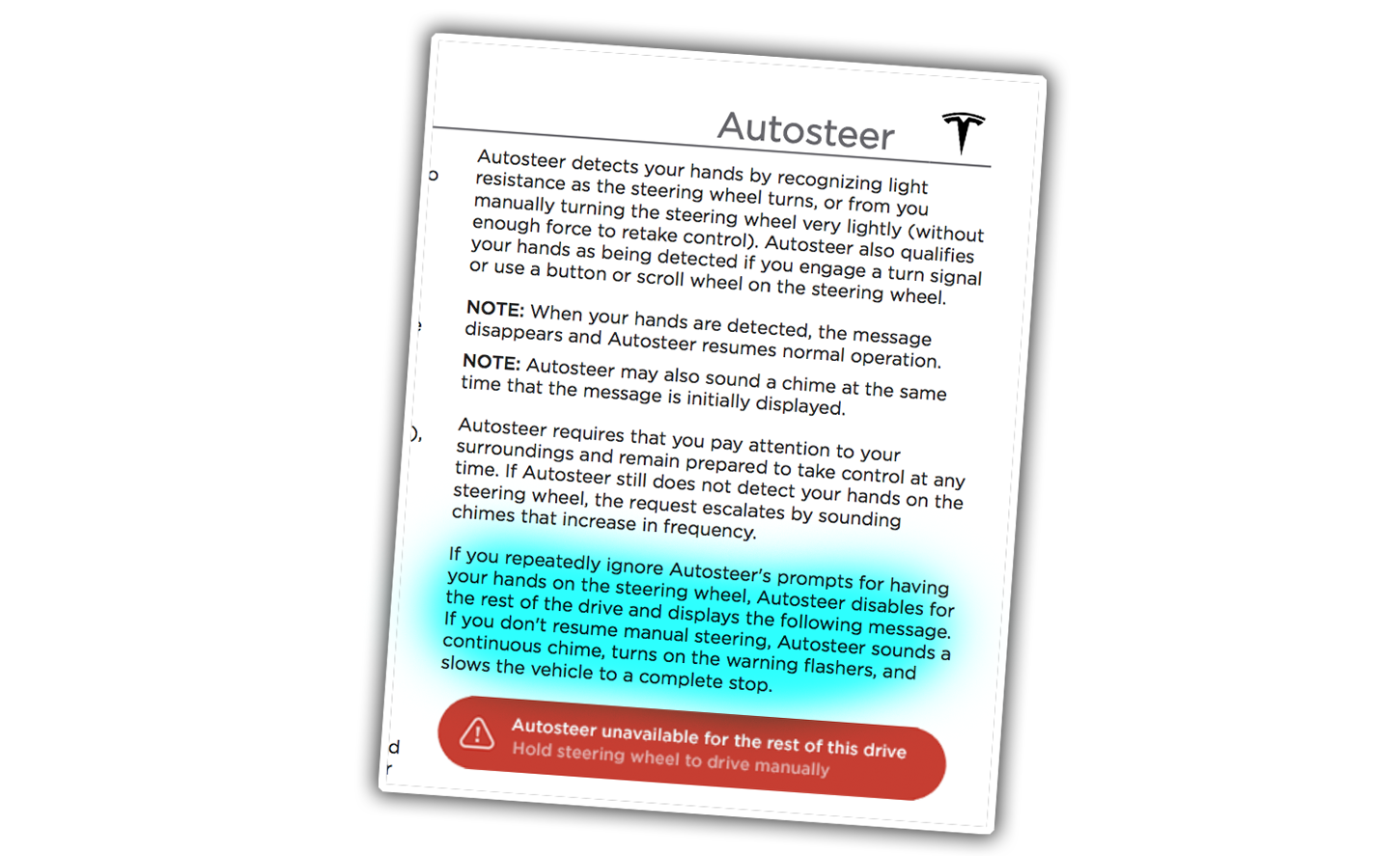

This will change when we have better failover or handoff solutions. Current methods will not cut it. For example, here's how Tesla's Model 3 owner's manual explains what happens when the Autosteer system does not detect a driver's hands on the wheel:

The car disengages Autosteer, puts the hazard lights on, and comes to a complete stop. This may be adequate for low-speed city streets, but on a highway or two-lane back road, coming to a dead stop in the middle of the road is an absolutely terrible plan.

I should mention Ford's system doesn't do any better, nor does GM's Super Cruise, nor anyone's, at least not so far.

None of this is easy. There are a lot of things that need to be decided, behavior-wise, of these automated systems, and, again, it's too important to let any one company have the final say here.

I am not fond of Level 2 semi-automated driver assist systems because of an inherent issue: the better they get, the more confidence drivers put into them, which means less vigilance, which means more trouble when the driver's input actually is needed. Even so, I can see the potential of these systems to make driving safer in a lot of contexts.

And part of this process is deciding what makes sense for disengaging these systems. A butt in the driver's seat feels like an absolute, but maybe for seat belt fastening, some buffer period is reasonable?

It's worth talking about, and I look forward to hearing what all of you clever people think.