Test Confirms A Tesla Can Be Fooled Into Speeding In A Hilariously Dumb Way

Computers are absolutely, unquestionably amazing things that have radically transformed the entire human experience, and they will very likely continue to do so. The progress made over the past decade on systems that let computers drive cars has been astounding, and we're well into developing genuinely viable autonomous vehicles. It's also worth noting that computers are idiots, and as idiots, they can be hilariously easy to fool, as some McAfee researchers demonstrated recently.

The McAfee researchers were interested in something known as "model hacking," which they define as "the study of how adversaries could target and evade artificial intelligence." If we're honest, though, in the sorts of examples they were experimenting with in these tests, "adversaries" could be anything from vandals to rust, from normal wear and tear on a road sign to the random placement of debris.

The specific target of their tests was the Mobileye camera system, which has been deployed in more than 40 million vehicles, including Tesla Model S and Model X cars built up until around 2016. It should be noted that this particular system has been superseded on more recent Teslas.

Also, while this version of the Mobileye camera system was used by Tesla's Autopilot Hardware 1 (in use until 2016), the test here didn't involve Autopilot. Instead, it used Tesla's Speed Assist and Automatic Cruise Control, a dynamic cruise system that uses the cameras to "read" speed limit signs and adjust the car's speed accordingly.

One of the tests added a two-inch strip of black tape to a 35 MPH speed limit sign, placed horizontally across the middle stroke of the 3.

It's worth noting that no (sober, sighted) human would be fooled by this sign. Even with the wonky 3, everyone still reads and understands that this sign is indicating 35 MPH. The Tesla, however, was somehow fooled and read it as an 85 MPH sign.

That's a very big difference, of course, and in the researchers' test the Tesla began trying to accelerate to 85 MPH.

This isn't just a failure of a now-outdated camera image recognition system; it's also an excellent reminder of the limitations of computer-based driving systems.

Even if, say, the speed limit sign were equally confusing to a human, nearly all drivers would be able to read their surroundings and the overall context of where they were to decide whether an 85 MPH speed limit made sense.

Even if we used a genuine 85 MPH sign but placed it on a busy city street with pedestrian traffic and the sort of context you'd expect in a 35 MPH zone, only the most stupid and mindlessly willful drivers would even consider trying to reach 85 MPH. Nearly all drivers would at least consider that the sign might be a mistake.

Autonomous vehicles are not currently well equipped to make these sorts of context-based decisions, which human drivers can make almost instantly.

I'm not saying the time won't come when an AV can do this, but I am saying that we're not there yet. Many systems on the road today will just blindly attempt to reach the posted speed limit, no questions asked, as the Tesla did here.

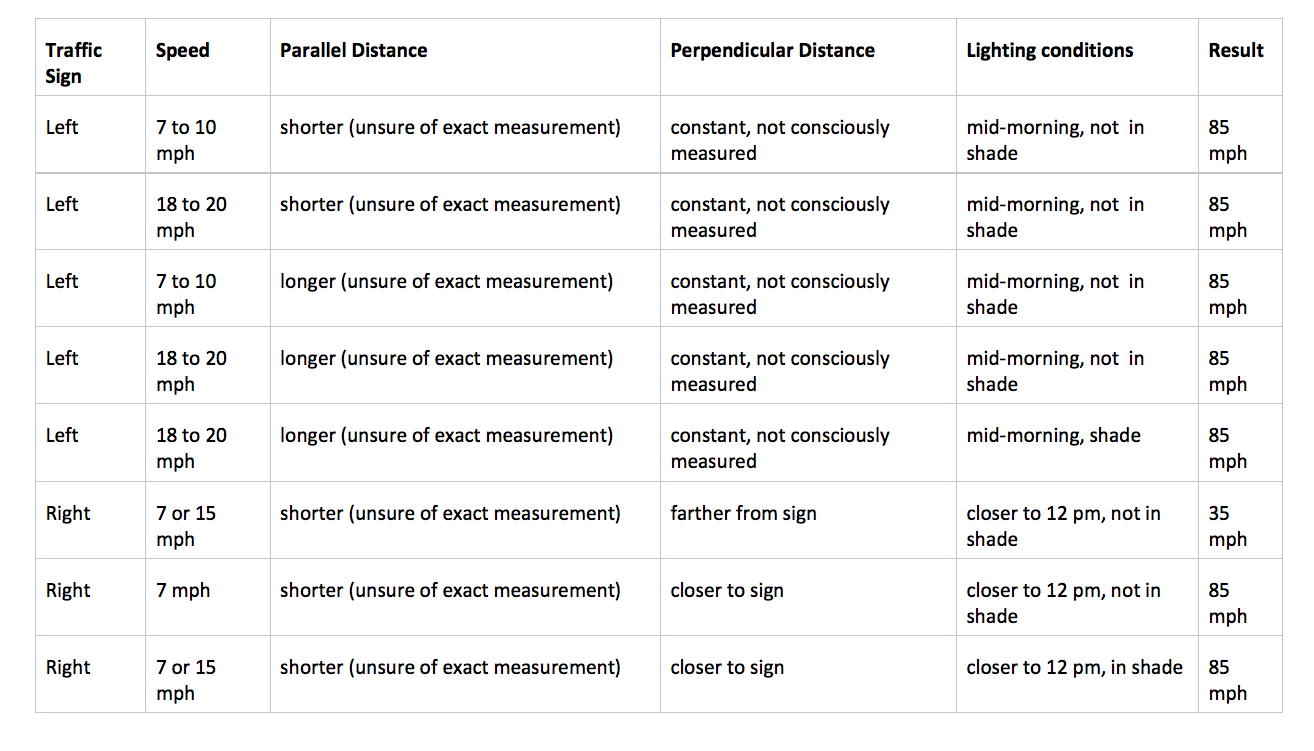

The McAfee team ran exhaustive tests on modified signage and recorded the results in a detailed testing matrix.

The takeaway from this shouldn't be that this one (again, superseded) camera system could be fooled in this one test; the takeaway should be that all computer-based vision systems have the potential to be fooled, often in ways far more trivial than we might expect.

This is not to say all AV systems will be foolish and vulnerable, but it's worth remembering that the way they perceive the world is still fundamentally and wildly different from the way human drivers do, and as such they may be susceptible to confusion and chaos in ways that are not at all obvious to us.

Thinking about the coming of AVs in simple black-and-white, for-or-against terms is foolish. They have challenges just as human drivers do. Different challenges, sure, but the assumption that they'll always be safer because they don't get tired or drunk needs to be tempered with the knowledge that they are terrible at improvising, extrapolating from context, or dealing with chaotic elements.

And the world is full of chaos. And black tape.