Robot, Take The Wheel Is The Roadmap You Need To Navigate The Autonomous Car Craze


The holidays are here, and people are wondering which books they should buy their friends and family. There are lots of great car-related ones out there (I just finished Where the Suckers Moon: The Life and Death of an Advertising Campaign—it's about Subaru marketing. It's good), but right now, I'd like to focus on Robot, Take The Wheel. It's a 2019 nonfiction comedy (if that's not an actual category, it is now) about autonomous cars written by our very own writer, artist, and comedian, Jason Torchinsky. It's damn good.

I'll begin by saying I work with Jason, so this writeup will be more biased than even a car review written by a journalist lavished by an automaker with boatloads of shrimp, fine wine, and a fine five-star hotel. Still, Robot, Take The Wheel: The Road To Autonomous Cars And The Lost Art Of Driving has 4.5 stars on Amazon, so my love for this book isn't solely a product of blinding bias.

With that out of the way, let's talk about what makes this book special in my eyes, and that is the fact that it takes a mystical concept with a high tendency to overwhelm, and condenses it into something humorous and relatable. Jason does this by describing the history behind previous "autonomous" vehicular efforts (like horses!), talking in laypeople's terms about the tech behind how future autonomous cars might work, discussing the ethics related to self-driving cars, exploring the tremendous and varied roles that truly autonomous cars could assume in society, pointing out potential pitfalls of autonomobiles, and above all, trying to understand how humans—both on the societal and personal level—will interact with cars that drive themselves.

Autonomous Vehicles Have Been Around For Centuries If You Think About It

Among my favorite parts of the book is the talk about the history of autonomous cars. While you may be thinking that such a history must be rather short considering how new and novel the concept is, the reality is that semiautonomous vehicles have existed for a long, long time. You just haven't realized it.

Early in the book's first chapter, "We've Been Here Before," Jason outlines the Society of Automotive Engineers' six levels of driving automation, and then talks about how the concept of autonomous vehicle technology is actually really, really old. Hell, horses are a great example, as Jason points out:

A horse inherently knows how to stay on a road, follow a path, avoid obstacles, stop if confronted with confusion or danger, make turns, look for potential hazards, and so on. What the horse relies on the human for are inputs regarding the desired speed of travel and guidance to maintain a proper path.

[...]

We forget just how much natural processing an equine brain is doing to drag a streetcar along a path; it's essentially what we're currently trying to make automated vehicles (AVs) do.

Then, after what I felt was a bit of a long discussion on early automobiles that could have been a bit better integrated into the book, Torchinsky gives other examples of early semi-automation, including railroads:

Railroads are a form of mechanical driving semi-automation; the rails take over the steering, navigation, and lane-keeping duties of a vehicle, a significant portion of the driving task.

[...]

Railroads were humanity's first successful deployment of a semiautonomous vehicle, and it remains a staggering success.

And then he talks about the fascinating Whitehead torpedo, built in the 1860s:

The first vehicle capable of sensing and reacting to its environment wasn't a land vehicle, and it couldn't carry people, just cargo, and that cargo was limited to explosives designed to blow up boats. The vehicle I'm talking about is a torpedo.

[...]

...it could keep to a constant, set depth under the surface and it could stay on a fixed course toward its target. Together, these were the makings of the first crude guidance system, and the first time any inanimate object could really control its direction and compensate to maintain it, even with environmental inputs acting upon it.

To do this, Whitehead installed two pieces of equipment in the torpedo: A horizontal rudder controlled by pendulum balance (to maintain depth) and a hydrostatic valve (a one-way, pressure-relieving valve), and a gyroscope system driving a vertical rudder to keep it on course.
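
For the code-inclined, the depth-keeping idea in that passage can be sketched as a simple feedback loop. To be clear, this is a toy model and not Whitehead's actual mechanism: the function name `simulate_depth_keeping`, the gains, the speed, and the simplified physics are all assumptions made up for illustration. The "hydrostatic" term reacts to how far the torpedo is from its set depth, and the "pendulum" term reacts to how steeply it is pitched, which damps the motion.

```python
def simulate_depth_keeping(target_depth_m, start_depth_m=0.0,
                           speed_mps=10.0, seconds=40.0, dt=0.05):
    """Toy depth-keeping loop; returns the list of depths over time.

    All numbers here are illustrative assumptions, not historical values.
    """
    depth_gain = 0.05   # hypothetical "hydrostatic valve" gain (depth error)
    pitch_gain = 1.5    # hypothetical "pendulum" gain (nose-down tilt)

    depth = start_depth_m
    pitch = 0.0         # radians; positive means nose-down (diving)
    history = [depth]

    for _ in range(round(seconds / dt)):
        depth_error = depth - target_depth_m
        # Rudder command: steer up when too deep or pitched down, and vice versa.
        rudder = -(depth_gain * depth_error + pitch_gain * pitch)
        # Simplified physics: the rudder sets pitch rate, pitch sets dive rate.
        new_pitch = pitch + rudder * dt
        new_depth = depth + speed_mps * pitch * dt
        pitch, depth = new_pitch, new_depth
        history.append(depth)

    return history


depths = simulate_depth_keeping(target_depth_m=5.0)
print(f"final depth: {depths[-1]:.2f} m")
```

With these made-up gains, the simulated torpedo starts at the surface and settles toward the set depth, which is the "compensate to maintain it" behavior the quote describes.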

Jason then dives deeper (get it?) into how the torpedo actually worked, before discussing other autonomous vehicle projects a bit more in line with what engineers are talking about today: things like Mercedes-Benz's "VaMoRs" autonomous van project from 1986 and its PROMETHEUS project, as well as the 2004 DARPA Grand Challenge.

By providing a clear roadmap of how the idea of an autonomous vehicle has morphed from something as simple as a horse or railroad into an actual vehicle with loads of sensors, Jason helps to demystify something that—because it's usually only talked about in the context of modern vehicles with complex sensor suites—tends to confuse the crap out of people.

How Autonomous Vehicles Work

Speaking of sensor suites, the book also includes a chapter titled "How Do They Work, Anyway?" in which Jason describes, in laypeople's terms, the sensors that go into self-driving cars. It's not all that technical, so if you're an enginerd looking for deep explanations on how every sensor functions, this probably won't satisfy you. For anyone else, it's a nice, easily understandable look at what goes into modern self-driving vehicles, including ultrasonic sensors, cameras, lidar, radar, and GPS, and how the inputs from the sensors are combined in what some call "sensor fusion" to yield a "composite image of the surrounding reality."

For example, Jason talks about how self-driving cars use cameras to understand the surroundings, writing:

When processing images from the camera, the car's artificial vision system has to look out for and identify a number of things:

Road markings

Road boundaries

Other vehicles

Cyclists, pedestrians, pets, discarded mattresses, and anything else in the road that is not a vehicle

Street signs, traffic signs, traffic signals

Other cars' signal lamps

To identify these objects and people, the camera systems must figure out which pixels in the image represent background and which represent things that need to be paid attention to. Humans can do this instinctively, but a machine doesn't inherently understand that a 1600 by 1200 matrix of colored pixels that we see as a Porsche 356 parked in front of the burned remains of a Carl's Jr. is actually a vehicle parked in front of a subpar fast-food restaurant that fell victim to a grease fire.

To get a computer to understand what it's seeing through its camera, a number of different methods have to be employed. Objects are identified as separate from their surroundings via algorithms and processes like edge detection, which is a complex and math-intensive way for a computer to look at a given image and find where there are boundaries between areas, usually based on differences in image brightness between regions of pixels.

[...]

Once individual objects are separated from their backgrounds, they need to be identified. Size and proportion are big factors in this, since most cars are, very roughly, similarly sized and proportioned, as are most people or cyclists and so on.
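
To make the edge-detection idea from that passage a little more concrete, here's a minimal Python sketch. It's an assumption-heavy toy, not what any real self-driving system runs: the function name `detect_edges`, the threshold value, and the tiny hand-made grayscale "image" are all invented for illustration. It simply flags pixels whose brightness differs sharply from the neighbor to the right or the neighbor below.

```python
def detect_edges(image, threshold=50):
    """Return a grid of booleans marking sharp brightness changes.

    `image` is a list of rows, each a list of brightness values (0-255).
    The threshold of 50 is an arbitrary, illustrative choice.
    """
    height = len(image)
    width = len(image[0])
    edges = [[False] * width for _ in range(height)]

    for y in range(height):
        for x in range(width):
            here = image[y][x]
            # Compare with the pixel to the right, if there is one.
            if x + 1 < width and abs(image[y][x + 1] - here) > threshold:
                edges[y][x] = True
            # Compare with the pixel below, if there is one.
            if y + 1 < height and abs(image[y + 1][x] - here) > threshold:
                edges[y][x] = True

    return edges


# A made-up 4x6 image: dark on the left, bright on the right.
image = [[10, 10, 10, 200, 200, 200] for _ in range(4)]

for row in detect_edges(image):
    print("".join("#" if is_edge else "." for is_edge in row))
```

Real vision systems use far more robust operators than this, but the core idea of hunting for sharp brightness differences between neighboring regions of pixels is the same one described in the quote.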

Jason discusses the limitations of these technologies (like dirt that could obstruct sensing capabilities) and why some are using lidar and some aren't. He relates it all back to what the average person understands: humans using various sensory inputs (like our eyes and ears) to inform our inputs into the car to keep it centered in the lane and driving down the road safely.

Ethics

One of the more fascinating topics that Jason tackles is ethics. For so many, the concept of self-driving cars is not just a confusing one, but a scary one. What if the car makes a bad decision? Heaven forbid, what if it and its autonomotive compatriots take over the world and make us all grease their zerk fittings against our wills?!

Okay, so Jason focuses more on the former question than the latter, but it's still an important and enrapturing discussion.

With conventional, human-driven cars, we take a lot of time and effort to train the organic computers that drive the cars to understand that driving like an idiot or with the intent to cause harm is very, very bad. We do this through culture, religion, education, and our legal system...But for machines, we'll need to codify these ideas.

Jason then mentions the Three Laws of Robotics from science fiction writer Isaac Asimov:

1. A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2. A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

3. A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

A bit later, Asimov added a zeroth law that outranked the other three:

0. A robot may not harm humanity, or, by inaction, allow humanity to come to harm.

Jason also discusses the Trolley Problem, in which a vehicle might have to choose between killing few and killing many. He ultimately thinks the problem, often cited as a major sticking point in autonomous vehicle development, is a bit silly, writing:

The truth is that, in reality, I don't think the trolley problem is really a likely dilemma that autonomous cars will face. Sure, they may end up in situations where sacrifice of life is unavoidable, but the idea that the robotic vehicles will have access to all the information that makes up the trolley problem—the number of passengers in the vehicle, specifically—is by no means assured, and as such is not likely to be a factor in the cars' decision making.

The book even includes the German Federal Ministry of Transport and Digital Infrastructure's twenty "ethical rules for automated and connected vehicular traffic," the very first set of autonomous car ethical rules set by any country. Here's Jason's summary of those rules:

It's suggested that robotic vehicles are actually an ethical imperative if they prove to be safer than human-driven cars, although they also admit that forcing people to use them is ethically questionable (rule 6).

Human life has precedence over animal life or property damage (rule 7).

All human lives have equal value, and no specific information about the people can affect their comparative "worth." This is good to hear, as it addresses my recurring nightmare that robotic vehicles will decide if you're worth saving based on your credit score (rule 9).

Situations like the trolley problem are addressed in several of the rules (rules 5, 8, and 9) and it's suggested that when these dilemmas do arise, an independent agency should be created to evaluate the situations and attempt to "process the lessons learned."

Semiautonomous systems that require a human to instantly take control of the vehicle in emergency situations (Level 2 autonomy) are clearly discouraged, and possibly banned (rule 17). As you can see, Germany agrees with my assessment in Chapter 4 that semiautonomy sucks.

Liability and accountability must be clearly communicated to a vehicle's passengers (rule 16) and in the case where the car is under autonomous control, liability is governed by the same sort of rules as in any product liability situation (rule 11); that means the company that made/sold the car is responsible.

Regarding the possibility of malicious hacking, the manufacturers of the robotic vehicles are responsible for keeping their vehicles secure and safe as much as possible and as actively as possible (rule 14). The rule actually suggests that automated driving is only justifiable if the vehicles can be made secure, IT-wise. Ideally, this means companies face severe penalties or even outright banning if they cannot keep their systems secure.

The ethical component of self-driving vehicle development is so important, and this book does a good job helping break it all down in simple terms.

Design

"They Shouldn't Look Like Cars" is the title of chapter seven, in which Jason, an artist who regularly writes detailed design breakdowns of new vehicles, talks about why self-driving cars—or Robotic Transportation Machines, as he likes to call them—should look significantly different than vehicles on the road today.

And if they should look anything like cars currently on the road, those cars should be vans, with Jason writing:

The idea that everyone should be facing forward, with attention focused on the large window at the front of the car really only makes sense if a human is driving the car.

[...]

Robotic vehicles should be designed from the inside out, and those insides should contain the maximum volume of space possible for the vehicle's size. The best way to do that is with boxy shapes, which means that the ideal baseline form for a robotic vehicle would be a van.

Specifically, Jason thinks Japanese Kei-class vans are a good place to look if you want an idea of how autonomous vehicle design could turn out in the future:

The reasons why cars like these thrive in Japan—and pretty much only Japan—are interesting, especially in the context of how people live in very dense metropolises. Personal space in a city like Tokyo is at a premium, especially private personal space. A car becomes something more than just transport in this context; it becomes a mobile bubble of private space that may be incredibly hard to find otherwise.

Another fascinating concept he tackles is the aggressive design language that's been spreading like wildfire of late:

So when you see an intimidating, angry looking car, the desired result is that you, at least on some unconscious level, ascribe those same traits to the driver. But what happens when there isn't a driver? Are people going to want angry and powerful looking cars that drive themselves? The entire context of the look of the car changes once we remove the driver from the equation. A person driving an aggressive looking car may appear intimidating, at least on some level (the owner hopes), but our knowledge that a human being is in control of the thing tempers our reaction. Centuries of human culture have given us at least some sense of understanding about the driver's possible motives, and we all have, collectively, enough trust in human society and the rule of law to accept that, most likely, this person means us no harm.

Aggressive styling will, Jason predicts, go away in favor of happier-looking cars, at least for the first couple of generations of self-driving cars, because many people just don't trust robots, especially mean-looking ones:

But once we take the driver out of the equation, I think there's more harm that can be done. A robotic vehicle designed to look aggressive is likely a very bad idea, at least from a public acceptance standpoint. We don't have centuries of experience to condition us to trust robots. In fact, most of the popular culture surrounding robots contains a significant number of stories designed to elicit fear and distrust.

Jason also argues that status will still be a factor even when people stop driving their own cars, citing a number of examples including the poor-selling but brilliant Tata Nano (which was marketed as a really cheap car, something that nobody really liked):

A safe bet is something that has always been important to car buyers: status. A car that telegraphs how much disposable income you have, accurate or not, has always been in demand.

[...]

This actually works well for an interior-focused vehicle, as there's already a lot of experience building luxury-focused vehicles for wealthy people who never intend to drive themselves. From the earliest chauffeur-driven town cars to modern Mercedes Sprinter van conversions that are designed to replicate private jet interiors, for decades the rich have been exploring how to enjoy cars without actually driving them.

The Tremendous Potential of Autonomous Cars

There is so much more to Robot, Take The Wheel, but I'll finish by describing a fun chapter in which Jason thinks outside of the box about various uses for self-driving cars.

He talks about vehicles that can expand your car's storage capacity and even tow your car:

these vehicles could be tasked with following your human-driven car as well, expanding your cargo capacity and acting as your own personal support vehicle. They could even tow a human-driven car that has experienced mechanical failure.

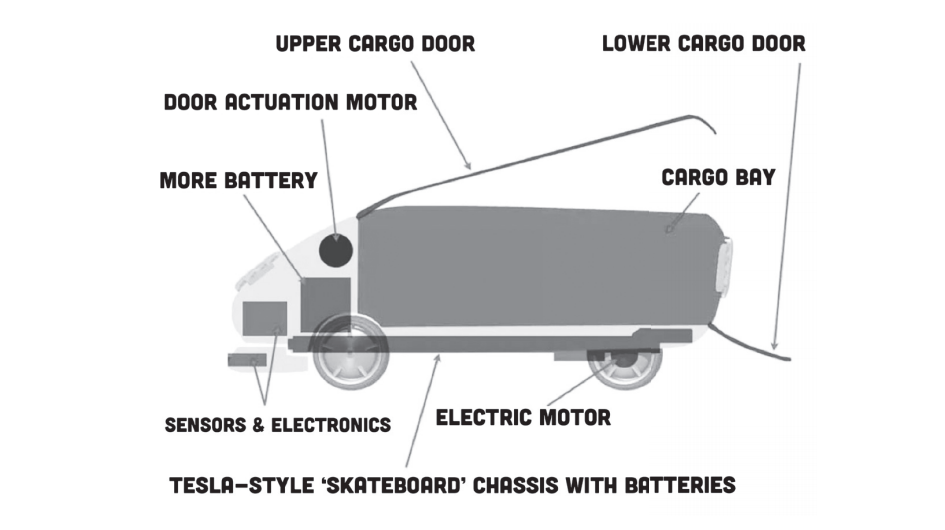

He goes into cargo-only vehicle design:

I mentioned the concept of a cargo-only vehicle a few chapters ago, but it's worth looking at its design in more detail. A cargo-only autonomous vehicle, essentially an errand-running robot, would essentially be a wheeled cargo box. As such, it could be much smaller than a conventional car, about the size of the bed of a pickup truck. In fact, the cargo area should be about this size, to allow for standard sheets of plywood and other commonly purchased and standardized larger-scale objects. The design of such an errand-bot could be pretty basic, really:

He even talks about how road trips might work when cars are driving themselves, writing:

You could download entire, curated road trips. Let's say you wanted to take Charlie Day's Amazing Corn Dog Tour Of America, a road trip he took and recorded where he traversed thirty-seven states to find the best corn dogs in the US. You could download the trip, perhaps with some sort of audio commentary track or music playlist, and set off: the GPS path of the original trip would play, complete with stopping at selected corn dog palaces.

Jason also talks about how self-driving cars will be the death of the "journey" in that they will turn "the world to a series of destinations instead of an entire continuum of places, people, and experiences." And he admits that he himself wants to continue driving for as long as he lives. "I'd say you can pry my steering wheel from my cold dead hands," he writes, "but the truth is I can't think of a worse way for either of us to spend an afternoon."

There's so much more to the book, like Jason discussing why semiautonomy—where a driver can let go of controls, but must be ready to take hold of them when the vehicle requests (this current system is used on a number of cars including Teslas)—is a dumb concept. He suggests that, at some point when autonomous cars become more common, human-driven cars be retrofitted to communicate with car-robots. He talks about why self-driving cars should have lamps on their roofs, and he even describes pranks that teens could play on self-driving cars (cow herding!).

It's a fun book, and though it's not particularly technical, it delivers exactly what Jason promises in the book's introduction, which is:

It's not a book about the details of the technology, because that changes so fast and so many people so much smarter than me can write those books. This book is essentially a giant thought experiment, where we'll try and imagine what the coming of autonomous vehicles means to us; how we'll get along with the robots that will take over our cars' jobs; what these things will look like; what sorts of jobs they may do; what we can expect of them; how they should act, ethically; how we can have fun with them; and how those of us who love to drive, manually and laboriously, can continue to do so.