NHTSA Opens Investigation Into Tesla Autopilot-Related Crashes Into Emergency Vehicles

After a series of crashes in which Autopilot-controlled Teslas struck emergency vehicles, the NHTSA decides it's time to know more

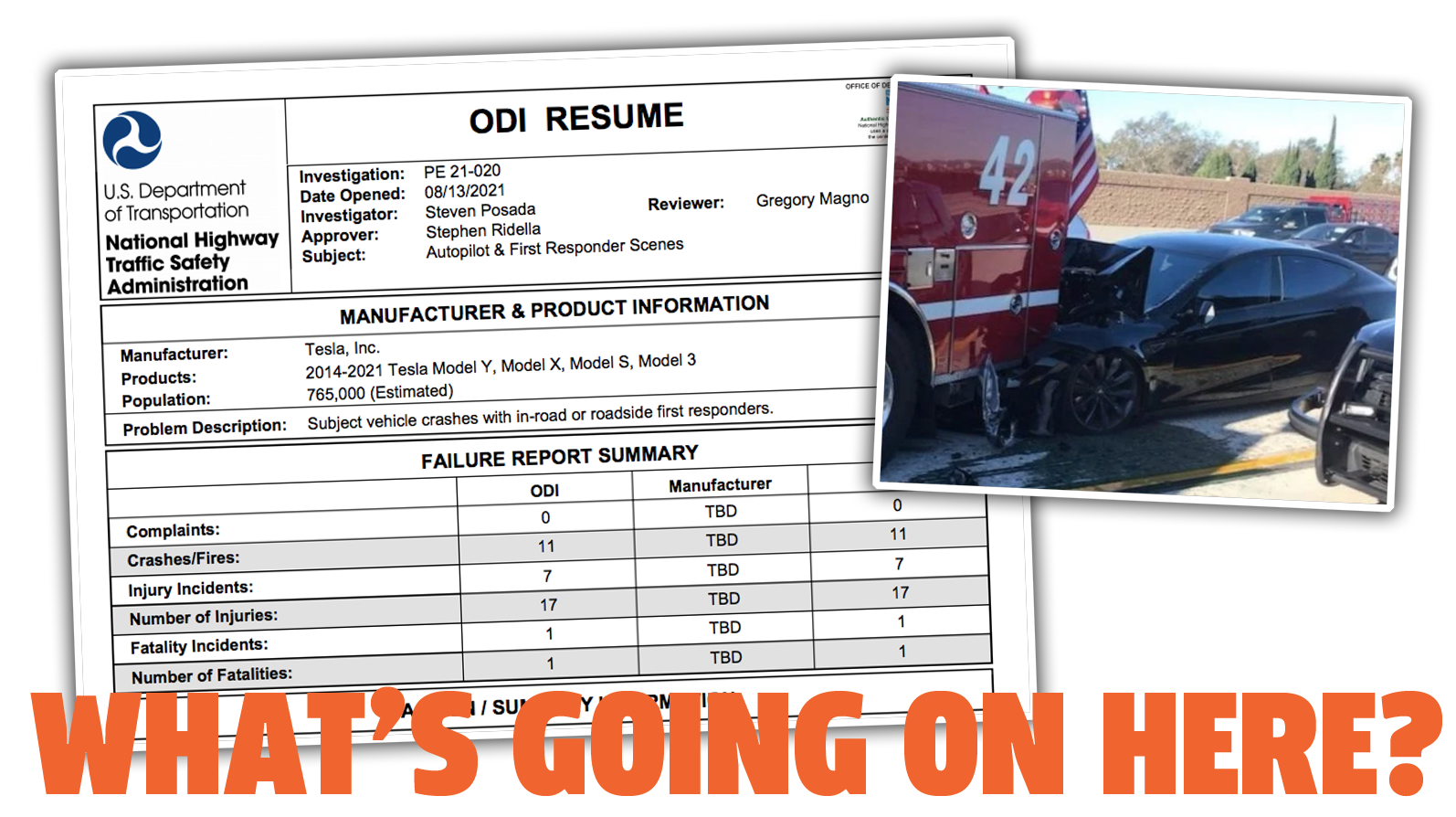

The National Highway Traffic Safety Administration's (NHTSA) Office of Defects Investigation (ODI, FYI) has opened an investigation into a series of Autopilot-controlled Tesla crashes that involved emergency vehicles dealing with other incidents. The investigation has specifically targeted 11 crashes, which caused 17 injuries and one fatality.

Here's the summary of the investigation from the ODI:

Since January 2018, the Office of Defects Investigation (ODI) has identified eleven crashes in which Tesla models of various configurations have encountered first responder scenes and subsequently struck one or more vehicles involved with those scenes. The incidents are listed at the end of this summary by date, city, and state.

Most incidents took place after dark and the crash scenes encountered included scene control measures such as first responder vehicle lights, flares, an illuminated arrow board, and road cones. The involved subject vehicles were all confirmed to have been engaged in either Autopilot or Traffic Aware Cruise Control during the approach to the crashes.

...

ODI has opened a Preliminary Evaluation of the SAE Level 2 ADAS system (Autopilot) in the Model Year 2014-2021 Models Y, X, S, and 3. The investigation will assess the technologies and methods used to monitor, assist, and enforce the driver's engagement with the dynamic driving task during Autopilot operation. The investigation will additionally assess the OEDR [object and event detection and response] by vehicles when engaged in Autopilot mode, and ODD [operational design domain] in which the Autopilot mode is functional. The investigation will also include examination of the contributing circumstances for the confirmed crashes listed below and other similar crashes.

The investigation will cover all vehicles that can use Autopilot, which comes to about 765,000 Teslas on the road today and includes every currently sold model that makes up the super-clever acronym S, 3, X, and Y.

For years we've seen crashes of this sort, in which Teslas on Autopilot somehow fail to "see" emergency vehicles, and this particular kind of failure highlights both the current limitations of AI and the fundamental differences between how computers and people approach the task of driving.

In short, computers are idiots. That's a wild oversimplification, but in the context of dealing with the real world, it's not exactly wrong. The sorts of crashes being investigated here, crashes into stopped emergency vehicles with flashing lights and sometimes even "flares, an illuminated arrow board, and road cones," are simply not the sorts of things an attentive human driver would fail to notice.

AI-based drivers, conversely, don't understand the larger context of the world, and drive more based on sets of rules that react to immediate sensor data—what's called "bottom-up" reasoning instead of human-style "top-down" reasoning, which incorporates an awareness of the overall context of a situation.

This sort of investigation is desperately needed and hopefully will be the first of many. And while I expect there will be some pushback from proponents of automated driving, the reality is that for any sort of AVs to exist, this type of scrutiny needs to happen.

The outcome I'd most like to see is one that acknowledges the inherent problems of any Level 2 system (one that demands a human driver be ready to take control with minimal or zero warning) and that pushes automakers to develop safe failover systems that take a car out of active traffic lanes when it finds a non-responsive driver.